27 Apr 2026

tl;dr

- built a wrapper in go with a collection of sandboxing tools (bubblewrap, seccomp, et al) in order to contain various coding LLM cli tools: cwage/agentpen

- forced (well, encouraged) its usage on my nix laptop so that invoking claude or codex actually executes it in this sandboxed tool

why?

When these LLM coding cli tools first emerged, I was cautious and skeptical. It didn’t take long for me to start being impressed, and eventually I wanted to see what these things could truly do beyond trivial coding exercises.

cracks knuckles: --dangerously-skip-permissions

Using these tools without guardrails is incredibly impressive. Being able to fire up claude and be like “hey i think my jellyfin client is wedged playing The Magnificent Ambersons – can you ssh into the jellyfin container and make sure GPU passthrough is still working?”, and then watching it use my local deploy key to ssh in and do the needful is remarkable. And terrifying. It’s all fun and games for me to YOLO with these tools on my own homelab or whatever, where I accept the risks (of both destructive actions and secrets exfiltration). But in a scenario where I’m running these tools at a company, in a project that touches many sensitive things? Claude’s uncanny ability to work out how to get access to what it needs (all while sending a firehose of any/all information it digs into in the meantime) is actually quite horrifying.

6 months later, i figured it was time to swing the pendulum the other direction and see what it’s like using these tools when you don’t trust them at all. claude and codex both provide their own sandbox options (which is good!), but even those historically haven’t been foolproof – and anthropic’s cli tool source is still weirdly obfuscated/not entirely OSS (despite being leaked). I wanted to build something that doesn’t really trust anyone involved – much like you’d sandbox actively malicious code (sort of).

Continue reading →

14 Apr 2026

tl;dr

- I used claude code to build an android app that lets two people prove they’re talking to each other using shared, rotating codes that only their devices can generate for identity confirmation

- repo: https://github.com/cwage/whoarewe

- OSS: MIT license

- It’s not on the play store, and probably never will be, unless I can convince a handful of friends and family to at least try it, much less use it.

- To try it: download the signed APK from the releases on the above github repo and either enable “Install unknown apps” in your Android settings and open it, or sideload it with adb install. If you don’t trust this (as you shouldn’t), but maybe kind of trust me, you can clone the repo, inspect the code and build it yourself. Have clippy review it!

The problem

Say that you get a phone call from a loved one. They’re panicked, crying, saying they’ve been in a car accident. They’re freakin out, you’re freakin out. They need money, now. It sounds exactly like them. What do you do? In a high pressure situation, there’s often not time (real or perceived) to establish verifiable identity confirmation. (“Tell me something only you’d know!”). People are often at their least rational or defensive in situations like this, and scammers are increasingly good at engineering precisely these situations to get your guard down. Stuff like this is starting to happen, with success.

I’m a pretty skeptical guy, and a year ago, I’d have rolled my eyes at this. Surely you can tell a fake voice from a real one, right? Turns out: not so much. Some studies put human detection of deepfake audio at roughly 48% accuracy (I can’t vouch for the methods and scientific rigor here, but it seems plausible). Modern voice cloning tools need as little as a few seconds of sample audio, cost a pittance, and the source material is stuff that’s already out there for anyone with a modest public presence: earnings calls, conference talks, social media clips. Some examples:

- AI-generated Biden robocall hit NH primary voters in 2024. Cost about a dollar to produce, took under 20 minutes.

- Arup, $25.6M stolen – finance employee joined a video call where the CFO and several colleagues were all deepfakes. 15 wire transfers before anyone noticed.

- Ferrari exec targeted by a deepfake of CEO Benedetto Vigna’s voice – accent and all. Got suspicious, asked a personal question the caller couldn’t answer. He was lucky.

Even before the advent of AI-supercharged techniques, social engineering scams were already bilking people out of their life savings regularly. The models are getting better and the detection tools (especially outside a lab) are getting worse.

The uncomfortable truth is that voice and video are no longer reliable signals of identity. They used to be (to an extent), not because they were cryptographically secure, but because faking them was hard. That’s no longer the case, so what do we do?

Continue reading →

08 Apr 2026

Throughout my dalliances with astrophotography, I was forced to get familiar with platesolving in a hurry. Platesolving, in a nutshell, is a process by which you can take a photo of the sky and figure out what part of “the sky” you’re looking at. astrometry.net is a set of tools to do this platesolving, which you can run either locally (if you download all the datasets) or through their web service. It’s a useful tool, but it’s also just kinda fun. Any time I see a photo with visible stars, I’m always tempted to run it through astrometry to see if it can solve and annotate the stars/objects in the view. For example, here’s a photo of spacex starship’s second integrated test that i ran through astrometry:

Continue reading →

09 Jun 2025

Obligatory Disclaimer

I am not a doctor. This is not medical advice. Consult with your doctor before attempting anything mentioned below. This is a summary of my experience and advice for what you should do after you totally talk to your doctor first. It’s possible I got some details wrong. It’s possible many conclusions from my sample-size n=1 anecdotal experiences are wrong. Feel free to correct me if so! And talk to your doctor.

What is This?

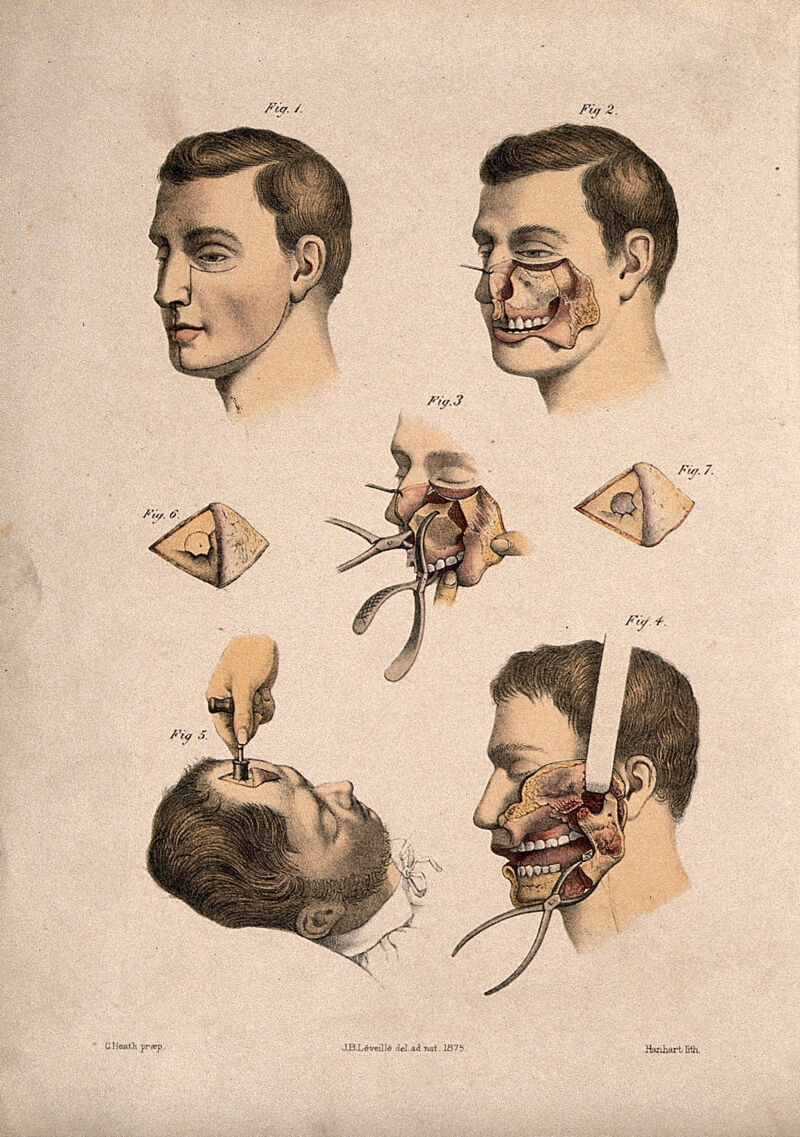

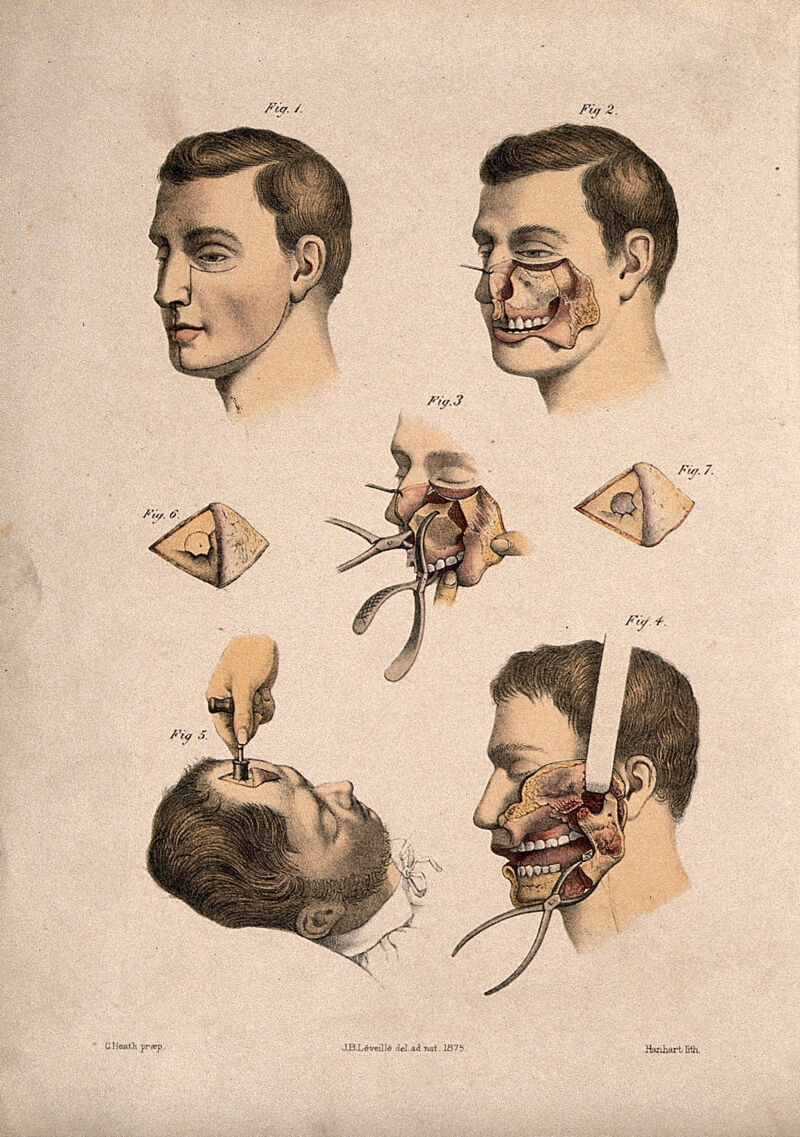

In short, this is an attempt to summarize some techniques for dealing with uncooperative sinuses and immune systems resulting in chronic sinusitis, but also applicable to more subtle “allergies” as well. A number of friends/family have had problems like this. I’ve had them particularly badly over the course of my life. None of this is foolproof, and I don’t always even abide by my own advice and still deal with recurring (now, much more minor) problems like sinus infections.

Continue reading →

03 Feb 2022

Over the years, I’ve come to appreciate the subtleties of braising, but I frequently run across people who are confused about what, exactly, braising is – and for many years, so was I. What is the common description of braising? “meat cooked slow in liquid”. But what does that mean? Is cooking meat in liquid slow in a pot on the stove braising? (spoiler: no) Is slow-cooking meat in liquid in a pot in the oven braising? (spoiler: also no, mostly). What follows is a quick explanation of my understanding of what braising is, why you should do it, and how it’s superior to some other methods. If you want the quick tl;dr: braising is only braising when there’s convection involved. Explanation below:

Continue reading →